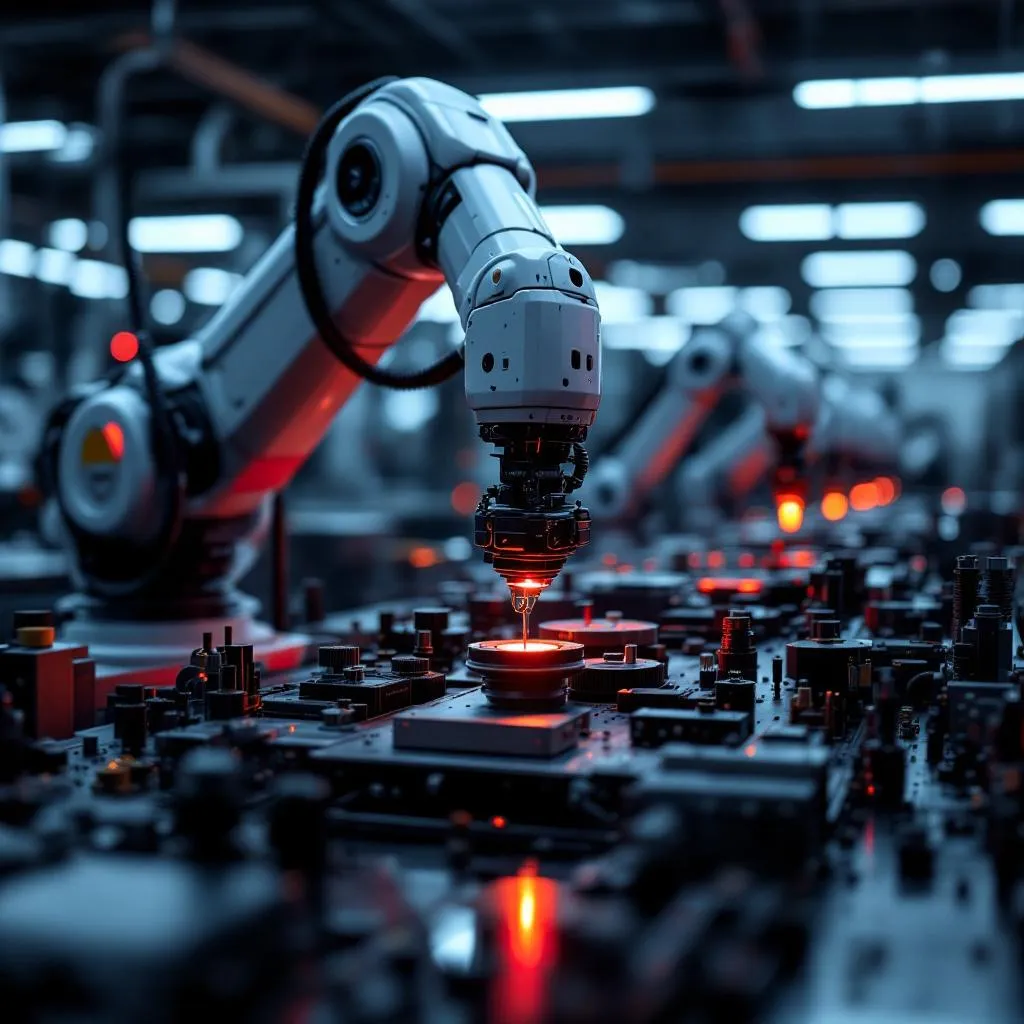

If you want to teach tomorrow’s machines to fend for themselves, whether that means steering through city streets, gripping unpredictable objects, or moving dirt on a construction site, you’ll need more than a clever algorithm. You need examples. Lots of them. Billions of moments caught on camera, played on endless loop for machines to study. The trouble is, all that footage piles up fast. Sorting through it is a slog, and even working at high speed, no ordinary team can keep up.

This is exactly the pain point that sparked NomadicML—a young tech company determined to turn chaos into order. CEO Mustafa Bal and CTO Varun Krishnan, whose friendship goes back to Harvard’s computer science classrooms, kept running into the same headache at big-name jobs (think Lyft, think Snowflake). Hours upon hours of vehicle and robot video just gathered digital dust, because no one had the time—or patience—to organize it at scale. Most companies? They barely touch 5% of the mountain of footage their fleets collect.

But there’s another wrinkle: It’s not the routine moments you need most, but the weird ones. The so-called “edge cases”—the freak events where AI gets nervous and stumbles. A bicyclist swerving against traffic, a sudden snow squall, a cop waving a car through a red light. Training on these rare scraps is how machines learn not just to imitate, but to truly navigate the real world.

NomadicML’s answer is both simple and sophisticated. They’ve built a platform that ingests raw video, then—using the latest vision-language models—transforms it into a richly annotated, searchable database. Suddenly, you can find exactly the ten seconds you need, whether you’re searching for every time a vehicle hesitated at an underpass or each close encounter with a runaway shopping cart. This isn’t just surveillance; it’s the raw feedstock for robust AI. Fleets are better monitored. New, tailor-made datasets feed the next iteration of models, making training faster and sharper.

On Tuesday, NomadicML closed an $8.4 million seed round that values the company at $50 million. TQ Ventures led the investment, joined by Pear VC and AI heavyweight Jeff Dean. For NomadicML, that means more customers, more features, and a chance to refine their tool into something indispensable. The momentum is real: they took first place at Nvidia’s GTC pitch contest just weeks before.

Here’s how CEO Bal puts it: “We help our customers actually see the story buried in their own data. Not just any random video, but the exact pieces that drive their autonomous vehicles and robots forward.” This, he argues, is where true progress in autonomy starts—not in mountains of generic footage, but in the data that matters.

Imagine trying to teach a self-driving car that it’s sometimes legal to ignore a red light, for example when a police officer motions it forward. Or suppose you want to find only those moments when a truck ducks under a rare, low bridge. Doing that by hand across a fleet’s worth of footage is all but impossible. But with Nomadic, it’s as simple as a search query. Their platform doesn’t just flag edge cases for compliance; it sends precisely those moments straight into model-training pipelines.

Their approach is catching the attention of serious players. Companies like Mitsubishi Electric, Natix Network, and Zendar are already tapping into Nomadic’s tools for their next-gen hardware. Antonio Puglielli, VP of Engineering at Zendar, says Nomadic cut months off his team’s workflow—no need to farm out annotation overseas, no headache over domain knowledge lost in translation. “They get this space better than anyone,” he says.

This push for automatic, model-driven annotation is reshaping how AI development works. Giants like Scale, Kognic, and Encord are rolling out their own smart labeling platforms. Nvidia has even unleashed a suite of open-source models—Alpamayo—meant to tackle the same challenge from a different angle.

But Krishnan insists their approach is different. “We’re not just another labeler,” he explains. “What we’ve built is an agent. You tell it what you’re looking for, and it figures out how to surface it. Multiple models work together—one parses the scene, another understands actions, a third adds context.” Their investors agree: by focusing not on general AI labeling, but on deep, self-contained search and reasoning, Nomadic has carved out a space of its own.

Schuster Tanger, the TQ Ventures partner who led the seed round, puts it simply: “No sane autonomy company should build this kind of infrastructure in-house. Their core advantage is the robot itself. Leave the data wrangling to experts like Nomadic.”

Talent, too, runs deep. Krishnan is an international chess master (world ranking: 1,549, to be exact), and the company’s dozen engineers can all boast published scientific work. Their current projects? A system for understanding lane changes through nothing but video, another that pinpoints a robot’s grip down to the pixel. Next up: extending these smarts to non-visual data, like lidar—making sense of the world even when it isn’t all visible.

Bal sums it up best: “Sorting terabytes of footage, running it through models with a hundred billion parameters—that’s outrageously hard work. But when you get it right, the insights are golden.”

Here, in the dusty vaults of forgotten video, NomadicML is giving tomorrow’s machines the memory—and the instincts—they desperately need.